- US appeals court denied Anthropic’s emergency request to pause Pentagon designation

- Supply chain risk label remains in effect for Anthropic’s AI products

- Government interest prioritized over financial and reputational harm to company

- Pentagon contractors restricted from using Anthropic AI models

- Legal battle continues across multiple courts and legal tracks

Court Rejects Anthropic’s Emergency Motion

The US Court of Appeals for the District of Columbia Circuit has rejected an emergency request from Anthropic to pause the US Defense Department’s designation of the company as a national security supply chain risk.

A three-judge panel ruled that the balance of equities favored the government, stating that the need to manage AI technology during an active military conflict outweighed potential harm to the company. “In our view, the equitable balance here cuts in favor of the government,” the panel wrote. The ruling allows the Defense Department’s designation to remain in place while legal proceedings continue.

Impact of the Pentagon Designation

The designation labels Anthropic’s products as a “supply-chain risk to national security,” preventing contractors working with the Pentagon from using its AI models.

This is the first time such a designation has been applied to an American company. The decision could influence how technology firms interact with government demands related to national security. The court acknowledged that Anthropic is likely to suffer “some degree of irreparable harm” but emphasized that national security considerations take precedence.

Background and Ongoing Legal Dispute

The dispute originated from a July 2025 agreement between Anthropic and the Pentagon to approve Anthropic’s AI model, Claude, for use on classified networks. Negotiations collapsed in February when the government sought broader usage terms, including unrestricted military applications. Anthropic maintained that its technology should not be used for lethal autonomous weapons or mass domestic surveillance.

In late February, US President Donald Trump ordered federal agencies to stop using Anthropic products, criticizing the company’s stance. Anthropic filed a lawsuit in March, calling the move unlawful retaliation.

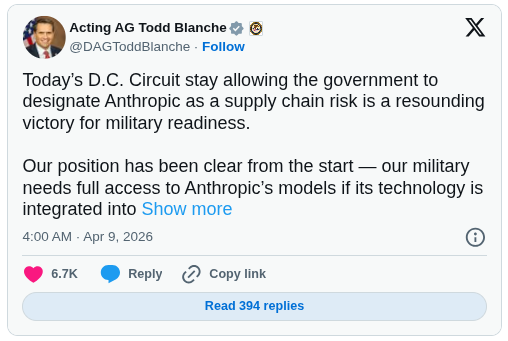

In a separate case, a California district court issued a preliminary injunction against the Pentagon’s actions, temporarily halting the directive. However, due to federal procurement law, the company must challenge the designation through multiple legal avenues, including the D.C. Circuit.

Acting US Attorney General Todd Blanche described the D.C. Circuit’s ruling as a “resounding victory for military readiness,” emphasizing that operational control lies with the government rather than private companies.

Separately, Anthropic recently launched Project Glasswing, an initiative to strengthen global cybersecurity using advanced AI. The company’s Claude Mythos Preview model has already identified thousands of high-severity software vulnerabilities. The initiative brings major tech firms together to proactively secure critical systems against emerging threats.